There are two related results that are commonly called “Gauss Lemma”. The first is that the product of primitive polynomials is still primitive. The second is that a primitive polynomial over a UFD (Unique Factorization Domain) $D$ is irreducible over $D$ if and only if it is irreducible over the quotient field of $D$.

Gauss Lemma: Product of primitive polynomials is primitive

If $D$ is a unique factorization domain and $f,g\in D[x]$, then $C(fg)=C(f)C(g)$, where $C(f)$ denotes the content of $f$ (a greatest common divisor of its coefficients, determined up to a unit of $D$). In particular, the product of primitive polynomials is primitive.

Proof

(Hungerford pg 163)

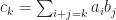

Write $f=C(f)f_1$ and $g=C(g)g_1$ with $f_1$, $g_1$ primitive. Consequently

$$C(fg)=C\big(C(f)f_1\,C(g)g_1\big)\approx C(f)C(g)C(f_1g_1),$$

where $\approx$ means "is an associate of". Hence it suffices to prove that $f_1g_1$ is primitive, that is, $C(f_1g_1)$ is a unit. If $f_1=\sum_{i=0}^n a_ix^i$ and $g_1=\sum_{j=0}^m b_jx^j$, then $f_1g_1=\sum_{k=0}^{m+n} c_kx^k$ with $c_k=\sum_{i+j=k}a_ib_j$.

If $f_1g_1$ is not primitive, then there exists an irreducible element $p$ in $D$ such that $p\mid c_k$ for all $k$. Since $C(f_1)$ is a unit, $p\nmid C(f_1)$, hence there is a least integer $s$ such that

$$p\mid a_i \text{ for } i<s \quad\text{and}\quad p\nmid a_s.$$

Similarly there is a least integer $t$ such that

$$p\mid b_j \text{ for } j<t \quad\text{and}\quad p\nmid b_t.$$

Since $p$ divides

$$c_{s+t}=a_0b_{s+t}+\dots+a_{s-1}b_{t+1}+a_sb_t+a_{s+1}b_{t-1}+\dots+a_{s+t}b_0$$

(with the convention $a_i=0$ for $i>n$ and $b_j=0$ for $j>m$), and $p$ divides every term other than $a_sb_t$, $p$ must divide $a_sb_t$. Since every irreducible element in $D$ (UFD) is prime, $p\mid a_s$ or $p\mid b_t$. This is a contradiction. Therefore $f_1g_1$ is primitive.
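As a quick numerical sanity check of $C(fg)=C(f)C(g)$ over $D=\mathbb{Z}$, here is a minimal sketch. The helper names `content` and `multiply` and the sample polynomials are illustrative assumptions, not part of the source, and the content is normalized to the non-negative gcd of the coefficients.

```python
from functools import reduce
from math import gcd


def content(poly):
    """Content of a polynomial over Z: the non-negative gcd of its coefficients."""
    return reduce(gcd, poly, 0)


def multiply(f, g):
    """Product of two polynomials given as coefficient lists [c_0, c_1, ...]."""
    product = [0] * (len(f) + len(g) - 1)
    for i, a in enumerate(f):
        for j, b in enumerate(g):
            product[i + j] += a * b  # contributes a_i * b_j to c_{i+j}
    return product


f = [6, 4, 10]      # 6 + 4x + 10x^2, content 2
g = [9, 0, 3, 15]   # 9 + 3x^2 + 15x^3, content 3
fg = multiply(f, g)

# Gauss Lemma over Z: the content is multiplicative.
assert content(fg) == content(f) * content(g)
```

A check like this only tests particular examples; it is the proof above that covers all polynomials over any UFD.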

Primitive polynomials are associates in $D[x]$ iff they are associates in $F[x]$

Let $D$ be a unique factorization domain with quotient field $F$ and let $f$ and $g$ be primitive polynomials in $D[x]$. Then $f$ and $g$ are associates in $D[x]$ if and only if they are associates in $F[x]$.

Proof

($\Leftarrow$) If $f$ and $g$ are associates in the integral domain $F[x]$, then $f=gu$ for some unit $u\in F[x]$. Since the units in $F[x]$ are the nonzero constants, $u\in F$, hence $u=b/c$ with $b,c\in D$ and $c\neq 0$. Thus $cf=bg$.

Since $C(f)$ and $C(g)$ are units in $D$,

$$c\approx cC(f)\approx C(cf)=C(bg)\approx bC(g)\approx b.$$

Therefore, $b=cv$ for some unit $v\in D$ and $cf=bg=vcg$. Consequently, $f=vg$ (since $c\neq 0$), hence $f$ and $g$ are associates in $D[x]$.

($\Rightarrow$) Clear, since if $f=gu$ for some unit $u\in D[x]\subseteq F[x]$, then $u$ is also a unit in $F[x]$, so $f$ and $g$ are associates in $F[x]$.
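A small worked example over $D=\mathbb{Z}$, $F=\mathbb{Q}$ (chosen here for illustration, not taken from the source) may help show why primitivity is needed:

$$3x+6=3\,(x+2),\qquad 3\in\mathbb{Q}^{\times},\qquad 3\notin\mathbb{Z}^{\times}=\{\pm 1\}.$$

So $3x+6$ and $x+2$ are associates in $\mathbb{Q}[x]$ but not in $\mathbb{Z}[x]$. This does not contradict the lemma, because $C(3x+6)=3$ is not a unit, so $3x+6$ is not primitive. For a primitive pair such as $2x+3$ and $-2x-3=(-1)(2x+3)$, the unit $-1$ already lies in $\mathbb{Z}$, and the two polynomials are associates in both rings.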

Primitive $f$ is irreducible in $D[x]$ iff $f$ is irreducible in $F[x]$

Let $D$ be a UFD with quotient field $F$ and $f$ a primitive polynomial of positive degree in $D[x]$. Then $f$ is irreducible in $D[x]$ if and only if $f$ is irreducible in $F[x]$.

Proof

($\Rightarrow$) Suppose $f$ is irreducible in $D[x]$ and $f=gh$ with $g,h\in F[x]$ and $\deg g\geq 1$, $\deg h\geq 1$. Then $g=\sum_{i=0}^n (a_i/b_i)x^i$ and $h=\sum_{j=0}^m (c_j/d_j)x^j$ with $a_i,b_i,c_j,d_j\in D$ and $b_i\neq 0$, $d_j\neq 0$.

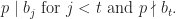

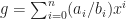

Let $b=b_0b_1\cdots b_n$ and for each $i$ let $b_i^*=b_0\cdots b_{i-1}b_{i+1}\cdots b_n$. If $g_1=\sum_{i=0}^n a_ib_i^*x^i\in D[x]$ (clear denominators of $g$ by multiplying by the product of the denominators), then $g_1=ag_2$ with $a=C(g_1)$, $g_2\in D[x]$ and $g_2$ primitive.

Verify that $g=(1_D/b)g_1=(a/b)g_2$ and $\deg g=\deg g_2$.

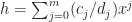

Similarly $h=(c/d)h_2$ with $c,d\in D$, $h_2\in D[x]$, $h_2$ primitive and $\deg h=\deg h_2$. Consequently, $f=gh=(a/b)(c/d)g_2h_2$, hence $bdf=acg_2h_2$. Since $f$ is primitive by hypothesis and $g_2h_2$ is primitive by Gauss Lemma,

$$bd\approx bdC(f)\approx C(bdf)=C(acg_2h_2)\approx acC(g_2h_2)\approx ac.$$

This means $bd$ and $ac$ are associates in $D$. Thus $ac=bdu$ for some unit $u\in D$. So $f=ug_2h_2$, hence $f$ and $g_2h_2$ are associates in $D[x]$. Consequently $f$ is reducible in $D[x]$ (since $\deg g_2,\deg h_2\geq 1$), which is a contradiction. Therefore, $f$ is irreducible in $F[x]$.
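The denominator-clearing step in the forward direction can be sketched concretely for $D=\mathbb{Z}$, $F=\mathbb{Q}$. This is a minimal illustration under that assumption: the function name `clear_denominators` and the sample polynomial are hypothetical, and the code follows the construction $b=b_0\cdots b_n$, $g_1=\sum a_ib_i^*x^i$, $a=C(g_1)$, $g_2=g_1/a$ from the proof.

```python
from fractions import Fraction
from functools import reduce
from math import gcd, prod


def clear_denominators(g):
    """Write g in Q[x] as (a/b) * g2 with a, b integers and g2 primitive in Z[x].

    g is a list of Fractions [g_0, g_1, ...] (constant term first), not all zero.
    """
    numerators = [coeff.numerator for coeff in g]        # the a_i
    denominators = [coeff.denominator for coeff in g]    # the b_i (always >= 1)
    b = prod(denominators)                               # b = b_0 * b_1 * ... * b_n
    # g1 = sum of a_i * b_i^* x^i, where b_i^* = b / b_i is the product of the other b_k
    g1 = [a_i * (b // b_i) for a_i, b_i in zip(numerators, denominators)]
    a = reduce(gcd, g1, 0)                               # a = C(g1)
    g2 = [coeff // a for coeff in g1]                    # primitive part of g1
    return a, b, g2


g = [Fraction(2, 3), Fraction(0), Fraction(4, 5)]  # 2/3 + (4/5) x^2
a, b, g2 = clear_denominators(g)

# g = (a/b) * g2 coefficient by coefficient, and g2 is primitive.
assert [Fraction(a, b) * c for c in g2] == g
assert reduce(gcd, g2, 0) == 1
```

Here $b$ is the full product of the denominators rather than their least common multiple, matching the proof; the fraction $a/b$ need not be in lowest terms.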

($\Leftarrow$) Conversely if $f$ is irreducible in $F[x]$ and $f=gh$ with $g,h\in D[x]$, then one of $g$, $h$ (say $g$) is a unit in $F[x]$ and thus a (nonzero) constant. Thus $C(f)=gC(h)$. Since $f$ is primitive, $g$ must be a unit in $D$ and hence in $D[x]$. Thus $f$ is irreducible in $D[x]$.
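Two small examples over $D=\mathbb{Z}$, $F=\mathbb{Q}$ (illustrative choices, not from the source) show how the hypotheses are used. First, primitivity cannot be dropped:

$$2x+2=2\cdot(x+1)$$

is a factorization into non-units of $\mathbb{Z}[x]$, so $2x+2$ is reducible in $\mathbb{Z}[x]$, yet it is irreducible in $\mathbb{Q}[x]$ because it has degree 1. Second, for the primitive polynomial $x^2-2$, a factorization in $\mathbb{Z}[x]$ into non-units would have to be of the form

$$x^2-2=(ax+b)(cx+d),\qquad ac=1,$$

since a constant factor would divide the content and hence be a unit. Then $a=c=\pm 1$ and $x^2-2$ would have an integer root, which it does not. So $x^2-2$ is irreducible in $\mathbb{Z}[x]$, and by the result above it is also irreducible in $\mathbb{Q}[x]$.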

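If $R$ is a PID, then every finitely generated module $M$ over $R$ is isomorphic to a direct sum of cyclic $R$-modules. That is, there is a unique decreasing sequence of proper ideals $(d_1)\supseteq(d_2)\supseteq\dots\supseteq(d_n)$ such that

$$M\cong R/(d_1)\oplus R/(d_2)\oplus\dots\oplus R/(d_n)$$

where $d_i\in R$, and $d_i\mid d_{i+1}$.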

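Every finitely generated graded module $M$ over a graded PID $R$ decomposes uniquely into the form

$$\Big(\bigoplus_{i=1}^{n}\Sigma^{\alpha_i}R\Big)\oplus\Big(\bigoplus_{j=1}^{m}\Sigma^{\gamma_j}R/(d_j)\Big)$$

where $d_j\in R$ are homogeneous elements such that $d_j\mid d_{j+1}$, $\alpha_i,\gamma_j\in\mathbb{Z}$, and $\Sigma^{\alpha}$ denotes an $\alpha$-shift upward in grading.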